Every enterprise runs on data and business logic. Customer information, product catalogs, pricing rules, inventory levels, order processing workflows, and thousands of other data elements and logical operations make up the actual substance of how the business works. Yet most enterprises treat this critical intellectual property as an implementation detail scattered across applications rather than as strategic capabilities deserving direct investment and governance.

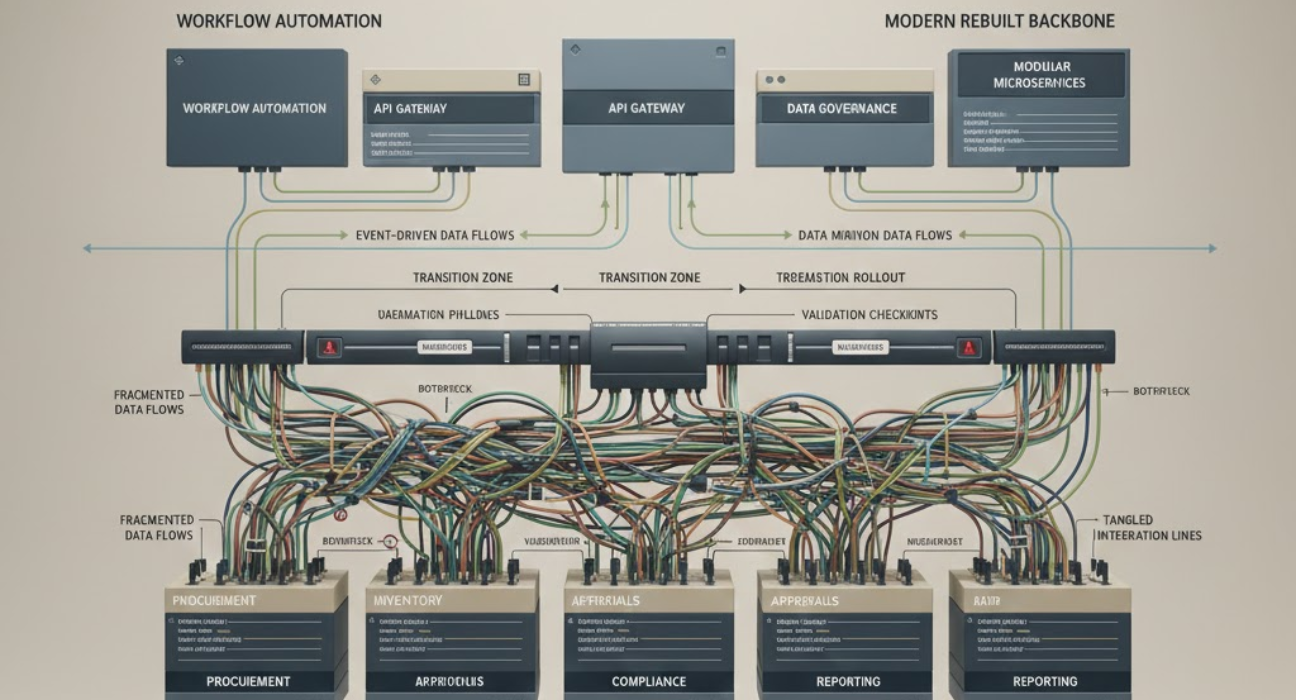

This creates real problems. Business logic gets duplicated across systems with subtle differences that cause inconsistent behavior. Data exists in multiple places with conflicting values. Rules that should be centrally managed are hardcoded in applications throughout the architecture. When business requirements change, updates need to happen in dozens of places, each increasing the risk of errors and inconsistencies.

The enterprises that recognize data and business logic as strategic capabilities and manage them accordingly gain substantial competitive advantages. They can adapt to market changes faster because logic is centralized and data is authoritative. They can launch new channels and services more quickly because the core capabilities are reusable. They reduce operational costs because they’re not constantly reconciling data inconsistencies or debugging logic discrepancies across systems.

The Problem with Application-Centric Architecture

Traditional enterprise architecture treats applications as the primary organizing principle. You have a CRM system, an ERP system, an e-commerce platform, and various other applications. Each owns its data and implements its business logic. Integration happens through point-to-point connections or middleware that moves data between systems.

This model worked acceptably when enterprises had fewer applications and simpler integration needs. It breaks down at modern enterprise scale, where you might have hundreds of applications that need to share data and coordinate business processes.

Consider customer data. The CRM system has customer contact information and interaction history. The e-commerce platform has purchase history and browsing behavior. The support system has case information and satisfaction scores. The marketing platform has campaign responses and segment assignments. The finance system has payment history and credit terms. Each system maintains its own version of customer data, and these versions inevitably diverge.

When a customer updates their email address in one system, how long does it take to propagate everywhere? When you need a complete view of a customer for a business decision, which system has the authoritative data? When data conflicts between systems, which version is correct? Most enterprises don’t have clear answers to these questions, so they deal with data inconsistency problems reactively as they arise.

Business logic duplication creates similar problems. Pricing logic might exist in the e-commerce platform, the order management system, the quote generation tool, and the billing system. Each implementation handles edge cases slightly differently. When pricing rules change, all four systems need updates, and keeping them consistent is difficult. Customers notice when they see different prices in different places or when quotes don’t match actual charges.

The Cost of Distributed Logic

Distributed business logic imposes costs that compound over time. Development teams waste effort reimplementing the same logic in different systems. Testing becomes complex because you need to verify consistent behavior across multiple implementations. Changes are risky because a mistake in any implementation can cause incorrect business outcomes.

The operational impact is substantial. Support teams spend time investigating why different systems show different results. Finance teams reconcile discrepancies caused by inconsistent logic. Customers get frustrated with inconsistent experiences. And opportunities get missed because launching new capabilities requires coordinating logic changes across many systems.

Many enterprises have attempted to solve this through service-oriented architecture or microservices, creating centralized services for common business capabilities. This helps when done well, but often just shifts the problem. Now, instead of logic duplication, you have complex service dependencies and integration challenges. And if the services weren’t designed with proper abstractions, you still end up with logic scattered across multiple services.

The better solution is treating business logic as a strategic capability that deserves architectural focus. This means identifying the core business rules and processes that drive the enterprise, extracting them from application-specific implementations, and creating reusable, well-governed capabilities that all systems can leverage.
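As a rough illustration of what extracting a rule into one shared capability can look like, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `PriceRequest` type, the tier names, the discount values, and the volume threshold are hypothetical and do not describe any real pricing policy. The point is only that the storefront and the billing system call the same function instead of each carrying its own copy of the rules.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP


@dataclass(frozen=True)
class PriceRequest:
    unit_price: Decimal   # list price per unit
    quantity: int
    customer_tier: str    # hypothetical tiers: "standard" or "gold"


# Hypothetical rule values; in practice these would live in governed configuration.
TIER_DISCOUNTS = {"standard": Decimal("0.00"), "gold": Decimal("0.10")}
VOLUME_THRESHOLD = 100
VOLUME_DISCOUNT = Decimal("0.05")


def quote_price(request: PriceRequest) -> Decimal:
    """Single implementation of the pricing rules, shared by every channel."""
    # Unknown tiers fall back to no discount.
    discount = TIER_DISCOUNTS.get(request.customer_tier, Decimal("0.00"))
    if request.quantity >= VOLUME_THRESHOLD:
        discount += VOLUME_DISCOUNT
    gross = request.unit_price * request.quantity
    net = gross * (Decimal("1") - discount)
    # Rounding is decided once, here, rather than separately in each system.
    return net.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def storefront_price(request: PriceRequest) -> Decimal:
    """What the e-commerce channel would display."""
    return quote_price(request)


def invoice_amount(request: PriceRequest) -> Decimal:
    """What the billing system would charge."""
    return quote_price(request)
```

Because both callers delegate to `quote_price`, a rule change is made in one place, and the displayed price and the invoiced amount cannot drift apart through separate edge-case handling.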

Data as a Strategic Asset

Most enterprises understand that data is valuable, but they treat it primarily as a byproduct of applications rather than as a strategic asset requiring direct management. Data gets created and stored wherever applications need it, with little consideration for reusability, quality, or governance.

This approach creates data problems that affect everything the enterprise does. Analytics reports show different numbers depending on which system sourced the data. Customer experiences are inconsistent because different channels use different data. Business decisions are delayed while teams reconcile conflicting information. And opportunities for data-driven innovation are limited because nobody trusts the data quality.

Treating data as a strategic asset means several things. First, clear ownership and governance so there’s no ambiguity about authoritative sources. Second, active data quality management rather than accepting whatever quality applications happen to produce. Third, architecture that makes data accessible where it’s needed without constant point-to-point integration. Fourth, security and privacy controls that are consistent across the enterprise rather than implemented differently by each application.
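The first point, unambiguous authoritative sources, can be made concrete with a small Python sketch. The ownership map, system names, and field names below are hypothetical, chosen to echo the customer data example earlier in this article. The sketch assumes each field has exactly one owning system and that a missing value from the owner should stay missing rather than be filled in from another system's copy.

```python
from typing import Any

# Hypothetical ownership map: which system is authoritative for each customer field.
FIELD_OWNERS = {
    "email": "crm",
    "credit_terms": "finance",
    "satisfaction_score": "support",
}


def resolve_customer(records: dict[str, dict[str, Any]]) -> dict[str, Any]:
    """Build one customer view by taking each field from its owning system.

    `records` maps a system name to that system's copy of the customer.
    Fields the owner has no value for are left out rather than guessed
    from a non-authoritative copy.
    """
    resolved: dict[str, Any] = {}
    for field, owner in FIELD_OWNERS.items():
        value = records.get(owner, {}).get(field)
        if value is not None:
            resolved[field] = value
    return resolved
```

With a map like this, a conflict between the CRM and finance copies of an email address has a defined answer: the CRM value wins because the CRM owns that field.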

This requires investment in data platforms, governance processes, and organizational capabilities. It means building systems that separate data management from application logic so data can be accessed by multiple applications without tight coupling. It means establishing data standards and enforcing them. And it means measuring and improving data quality as an ongoing operational concern.
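Measuring data quality as an ongoing concern usually starts with a few simple, repeatable metrics. The Python sketch below computes two of them for a hypothetical `email` field: completeness (the share of records with any value) and validity (the share with a plausibly formatted value). The record shape and the deliberately loose pattern are assumptions for illustration, not a recommended validation rule.

```python
import re

# Deliberately simple pattern for illustration; real email validation is more involved.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def email_quality(records: list[dict]) -> dict[str, float]:
    """Return completeness and validity rates for the `email` field."""
    total = len(records)
    if total == 0:
        return {"completeness": 0.0, "validity": 0.0}
    # A record counts as complete if it has a non-empty email value.
    present = [r["email"] for r in records if r.get("email")]
    # A record counts as valid if that value also matches the pattern.
    valid = [e for e in present if EMAIL_PATTERN.match(e)]
    return {
        "completeness": len(present) / total,
        "validity": len(valid) / total,
    }
```

Tracked over time and per source system, numbers like these turn "nobody trusts the data" into something a team can own and improve.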

The return on this investment shows up throughout the organization. Development teams move faster because they can access reliable data through well-defined interfaces. Business teams make better decisions because they trust the data. Customer experiences improve because data is consistent across channels. And new capabilities can be built more quickly because the data foundation is solid.

The Governance Challenge

Managing data and logic as strategic capabilities requires governance that most enterprises lack. Who decides what business logic should be centralized versus distributed? Who owns data quality for customer information? Who approves changes to core business rules? Who ensures consistency across systems?

Without clear governance, these decisions get made implicitly through individual project choices. Each development team decides how to implement pricing logic based on its immediate needs. Each application team manages data quality according to its own standards. The result is fragmentation and inconsistency.

Good governance doesn’t mean centralized control over everything. It means clear decision rights, established standards, and processes for handling exceptions. Some decisions need centralized authority because consistency is critical. Others can be delegated because local optimization is more valuable than global consistency.

The challenge is establishing effective governance without being bureaucratic. Too much governance slows everything down and creates friction that teams work around. Too little governance leads to the fragmentation and inconsistency that created the need for governance in the first place.

The enterprises that get this right have governance models that focus on outcomes rather than process compliance. They measure data quality and logic consistency rather than just checking that proper procedures were followed. They invest in making the right choices easy through good tooling and clear patterns rather than relying on extensive review and approval.
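One way to measure logic consistency directly, rather than auditing procedures, is to run the same inputs through each existing implementation and report where they disagree. The Python sketch below assumes two hypothetical implementations of an order total that differ only in rounding; the function names and the rounding choices are invented for the example.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP
from typing import Callable

PriceFn = Callable[[Decimal, int], Decimal]


def storefront_total(unit_price: Decimal, quantity: int) -> Decimal:
    """Hypothetical storefront implementation: rounds half up."""
    return (unit_price * quantity).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def billing_total(unit_price: Decimal, quantity: int) -> Decimal:
    """Hypothetical billing implementation: truncates to cents."""
    return (unit_price * quantity).quantize(Decimal("0.01"), rounding=ROUND_DOWN)


def find_disagreements(
    implementations: dict[str, PriceFn],
    cases: list[tuple[Decimal, int]],
) -> list[dict]:
    """Run every case through every implementation and collect mismatches."""
    disagreements = []
    for unit_price, quantity in cases:
        results = {
            name: impl(unit_price, quantity)
            for name, impl in implementations.items()
        }
        # More than one distinct result means the systems disagree on this case.
        if len(set(results.values())) > 1:
            disagreements.append({"case": (unit_price, quantity), "results": results})
    return disagreements
```

The count of disagreements over a representative set of cases is an outcome metric: it goes down only when the implementations actually converge.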

Making Logic and Data Reusable

The value of treating data and logic as strategic capabilities comes from reusability. When business logic is centralized and well-defined, new applications can leverage it without reimplementation. When data is accessible through proper interfaces, new capabilities can be built without complex integration projects.

Achieving this reusability requires architectural discipline. Business logic needs clear abstractions that separate the essential rules from the application-specific details. Data needs to be accessible through APIs that hide implementation details and can evolve without breaking consumers. And both need proper versioning and lifecycle management so changes can be made safely.
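A minimal Python sketch of the versioning idea follows. It assumes a hypothetical internal `StoredCustomer` shape and two hypothetical contract versions, `v1` and `v2`. Consumers only ever see the versioned output, so the stored shape can change and a new contract can be introduced without breaking callers that still rely on the old one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredCustomer:
    # Hypothetical internal storage shape; consumers never see this directly.
    id: int
    given_name: str
    family_name: str
    email: str


def to_v1(customer: StoredCustomer) -> dict:
    """Original contract: a single combined name field."""
    return {
        "id": customer.id,
        "name": f"{customer.given_name} {customer.family_name}",
        "email": customer.email,
    }


def to_v2(customer: StoredCustomer) -> dict:
    """Newer contract: structured name. v1 stays available for existing consumers."""
    return {
        "id": customer.id,
        "name": {"given": customer.given_name, "family": customer.family_name},
        "email": customer.email,
    }


SERIALIZERS = {"v1": to_v1, "v2": to_v2}


def get_customer(customer: StoredCustomer, version: str = "v1") -> dict:
    """Return the customer in the contract version the caller asked for."""
    if version not in SERIALIZERS:
        raise ValueError(f"unsupported API version: {version}")
    return SERIALIZERS[version](customer)
```

Retiring `v1` then becomes a lifecycle decision made deliberately, with known consumers, rather than a side effect of a storage change.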

Many enterprises struggle with reusability because their existing systems weren’t designed for it. Logic is tightly coupled to specific applications. Data is stored in formats optimized for particular use cases. Creating reusable capabilities requires refactoring these systems, which is expensive and carries risk.

The pragmatic approach is to build reusability incrementally. When new capabilities are needed, build them as reusable services rather than application-specific implementations. When existing logic needs modification, consider extracting it into a reusable form. When data quality problems arise, fix them in ways that benefit multiple consumers rather than just the immediate need.

Over time, this accumulates into a portfolio of reusable capabilities that accelerate development and improve consistency. The investment pays returns through faster delivery, lower maintenance costs, and better business outcomes.

AI and Automation Opportunities

When data and business logic are properly managed as strategic capabilities, they create opportunities for AI and automation that would otherwise be impossible or impractical.

Machine learning models need high-quality training data. If your data is fragmented across systems with inconsistent quality, building effective models is difficult. When data is well-governed and accessible, training and deploying models become far more practical, because teams spend their effort on the models rather than on finding and reconciling inputs.

Process automation requires clear business logic that can be encoded reliably. If logic is scattered across applications with subtle variations, automation is risky because you can’t be confident the automated behavior matches intended business rules. When logic is centralized and well-defined, automation becomes safer and more valuable.

The enterprises getting real value from AI aren’t necessarily those with the most sophisticated algorithms. They’re the ones with data and logic organized in ways that make AI and automation practical. They’ve invested in the foundations that enable advanced capabilities rather than trying to build advanced capabilities on inadequate foundations.

How Ozrit Approaches Data and Logic Programs

At Ozrit, we’ve helped enterprises transform data and business logic from scattered implementation details into strategic capabilities. Our approach recognizes that this transformation is as much organizational as technical.

We start with an assessment that identifies where critical data and logic exist, how they’re currently managed, and what problems this creates. This goes beyond cataloging systems. We examine actual data quality, trace business logic implementations across applications, understand governance structures, and quantify the operational impact of current approaches. This assessment typically takes four to six weeks and involves our senior architects working across your organization.

Based on this assessment, we develop a transformation roadmap that prioritizes capabilities by business value and technical feasibility. Rather than attempting to centralize everything at once, we focus on the data and logic that matter most to business outcomes and cause the most operational problems when inconsistent.

We structure the work in phases that deliver measurable improvements within three to six months. Early phases typically focus on high-value use cases that demonstrate the benefits of centralized data and logic. Later phases expand coverage and build more sophisticated governance and management capabilities.

Each program has a dedicated senior delivery lead from our team who owns the execution and outcomes. This person coordinates technical work, manages organizational change, and ensures progress toward strategic capability goals. They’re experienced architects who understand both the technical patterns and the organizational challenges involved in this type of transformation.

Our onboarding process gets teams productive quickly on data and logic work. We assign engineers from our team of 250+ developers and specialists who have experience building data platforms, extracting business logic, and implementing governance frameworks. They spend their first two to three weeks understanding your data landscape, business rules, and organizational context. By week four, they’re contributing to transformation work.

We handle the complex technical work these programs require: building data platforms that serve multiple consumers, extracting business logic from applications into reusable services, implementing governance frameworks that are effective without being bureaucratic, and migrating systems to use centralized capabilities without disrupting operations.

Because we operate across time zones with 24/7 coverage, we can maintain momentum on transformation programs and provide ongoing support for the new capabilities we build. Data and logic platforms need to be highly reliable because many systems depend on them, and having experienced teams available continuously provides the operational support these critical capabilities require.

The Competitive Advantage

Enterprises that manage data and business logic as strategic capabilities can move faster than competitors still dealing with fragmented implementations. They can launch new products and channels in months rather than years because the foundational capabilities are reusable. They can adapt to market changes quickly because logic is centralized and can be updated consistently. They can make better decisions because their data is reliable and accessible.

This advantage compounds over time. Each new capability builds on previous investments rather than starting from scratch. Each improvement benefits multiple systems rather than just one. And the gap widens as competitors continue struggling with data inconsistency and logic duplication while you’re building on solid foundations.

The question isn’t whether data and business logic are important. Every enterprise leader understands they are. The question is whether they’re managed as strategic capabilities deserving direct investment and governance, or treated as implementation details that emerge from application development. The enterprises making the first choice are building competitive advantages that are difficult for others to replicate.